A programming language for everyone: BASIC

From Feynman to Einstein: a predestined career

Kemeny’s career, however, was certainly no accident. After graduating from George Washington High School, he entered Princeton University in 1943, where he studied mathematics and philosophy. These were eventful years: he interrupted his studies for a year to work on the Manhattan Project alongside the future Nobel laureate Richard Feynman. He graduated in 1947 and, while working on his doctorate, even served as Albert Einstein’s assistant. Finally, in 1949, he received his doctorate with a dissertation entitled “Type-Theory vs. Set-Theory”. In 1953 he joined the Department of Mathematics at Dartmouth College, and he served as the college’s president from 1970 to 1981. Under his guidance, Dartmouth became a pioneer in the use of computers and in treating computer literacy as equal in importance to reading literacy.

The use and spread of BASIC

Although the language was already in use on several minicomputers, its spread was limited by their high cost. The situation changed at the beginning of 1975 with the introduction of the MITS Altair 8800, often regarded as the first personal computer. The spread of personal computers increased demand for a programming language that was within the reach of many people and required little memory.

But it was with the introduction of Altair BASIC, an interpreter written by Bill Gates and Paul Allen that fit in only 4 KB of memory, that the language began to spread widely. Then, in 1977, three major home computers, the Commodore PET, the Apple II, and the Radio Shack TRS-80, shipped with BASIC built into their firmware. The language came back into vogue in the 1990s, after Microsoft introduced Visual Basic in 1991. Visual Basic, however, differed greatly from the original BASIC; the only point of contact was the similar syntax.

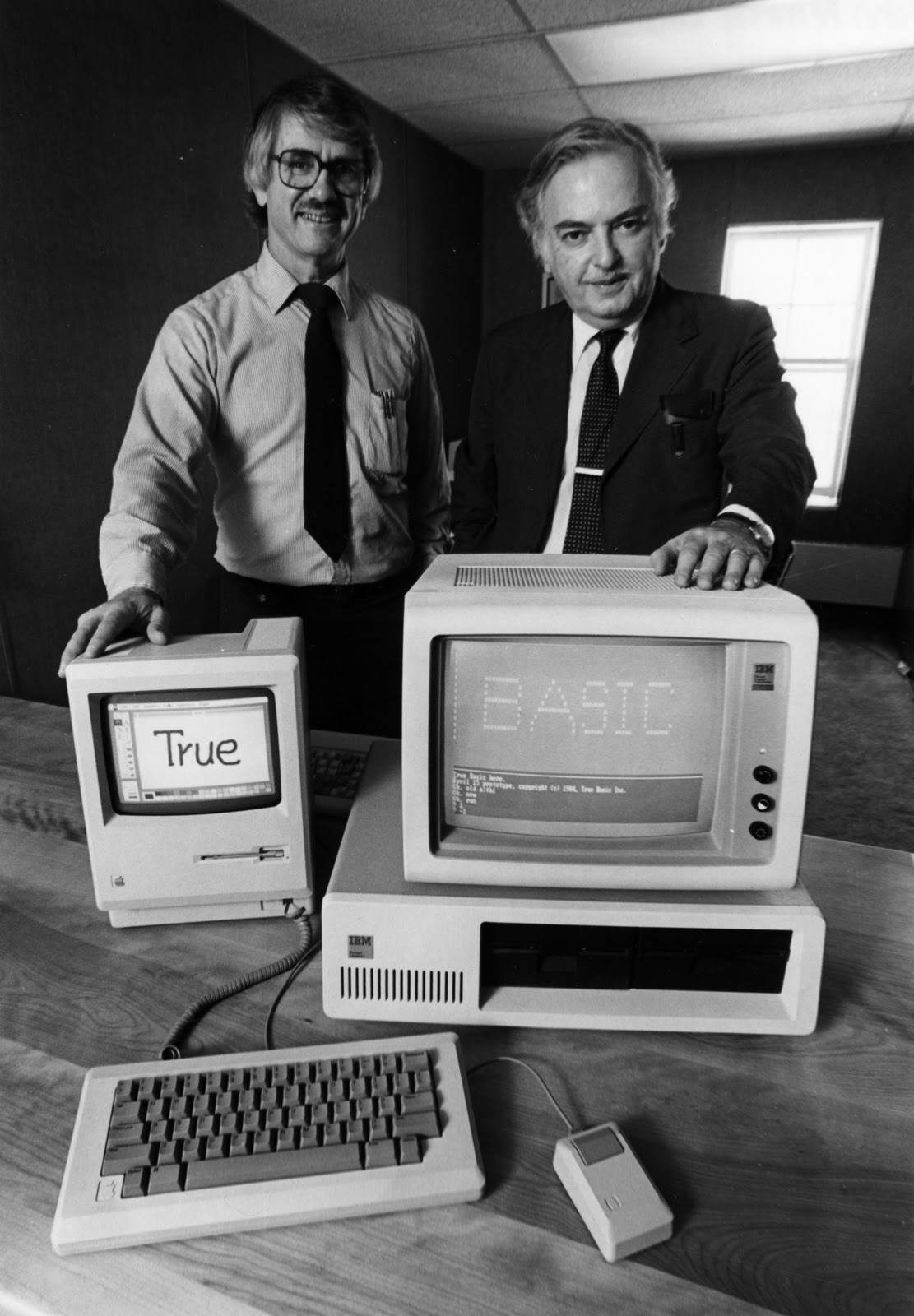

Between past and future, BASIC has made programming history. And it, like its inventor, John George Kemeny, will forever be part of the history of computing.